IoT & Embedded Technology Blog

Enhancing IoT Security through Virtualization

In the early days of computing, hardware limited software’s potential. Low memory density, simple processors, and slow clock rates enforced constraints on the possible range of instructions and the number of operands available to the software programmer, limiting software complexity and flexibility. Fast forward through 50 years of steady technological progress, and software has gained almost unlimited potential due to cheap, powerful commodity computing hardware.

Virtualization is the latest step in unleashing software’s full power: it abstracts software further from the hardware that runs it. We define a virtualized resource as a software-defined resource that is typically intended to mimic or replace a physical, hardware-defined one.

A basic example of consumer-facing virtualization is operating system partitioning – running multiple operating systems on one computer. Mac users may be familiar with Boot Camp Assistant, a built-in tool that lets a user create a Windows partition in order to run Windows-only applications.

Virtualization is huge in the enterprise IT world. VMware, a leading provider of IT virtualization solutions, realized revenues of $1.5B in Q1 2015 (the last quarter reported before its acquisition by Dell), up 13% from the previous year. Amazon Web Services generated $2.4B in Q4 2015, up 69% YoY. Docker, a private virtualization company founded in 2010, does not release public revenue numbers, but is now valued at more than $1B.

In the enterprise IT market, economies of scale dictate that a few large organizations own and update the hardware, leasing out virtualized portions of it on demand. Customers avoid large up-front investments in rapidly depreciating hardware while gaining the flexibility to deploy, reconfigure, and tear down virtual servers, desktops, and pre-packaged applications.

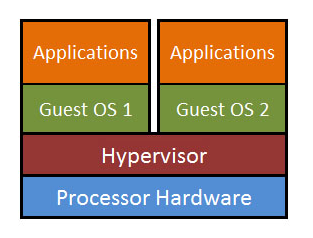

The benefits of virtualization come at the cost of system performance. A piece of software called a hypervisor sits on top of the hardware and creates the desired virtualized computing environments (see Figure 1). The hypervisor consumes some of the system's processing power and memory capacity in order to perform its virtualization functions. It therefore imposes a performance tax relative to a single operating system running directly on the physical hardware.

Figure 1: Hypervisor software enables multiple operating systems on the same underlying hardware.

Embedded Virtualization

The embedded market faces different market pressures than the enterprise IT market. Embedded systems tend to feature real-time constraints, safety-critical performance requirements, and limited access to peripheral input/output devices.

Poor performance of some embedded systems (fighter jets, ballistic systems, HVAC systems, industrial robotics, automotive ECUs, electrical grid control software) could be extremely costly – sometimes even fatal.

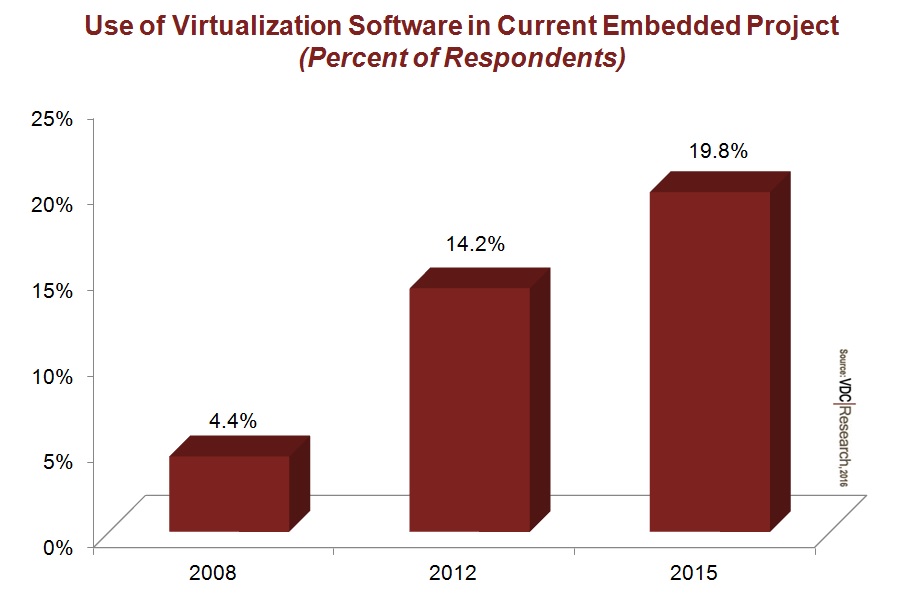

As noted previously, virtualization imposes a tax on performance. As a result, adoption of virtualization technology in the embedded market has been slower and more muted than in the enterprise IT market. But the technology has gained considerable traction in the embedded market in recent years, increasing from 1 out of 20 embedded projects in 2008 to 1 out of 5 projects in 2015. Why?

Figure 2: Adoption of embedded virtualization increased fourfold over the past 7 years due to hardware advances and pressing security concerns.

IoT Driving Embedded Virtualization

There are two main forces driving the growth in embedded virtualization: hardware and the IoT.

The first force—hardware—is an enabler. Small sacrifices in performance due to hypervisor overhead are absorbed by regular, relatively cheap advances in processor power. One vendor estimates that its hypervisor produces only a 2% performance penalty for the underlying embedded system prior to any performance tuning. For more flexible embedded systems with shorter upgrade and development cycles, the small hit to performance is negligible when migrating to new hardware.
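As a rough illustration of why a small overhead is often tolerable, the sketch below applies a flat fractional overhead to a task's execution time and checks it against a deadline. Only the 2% figure comes from the vendor estimate above; the task timings, the function names, and the assumption that overhead scales execution time uniformly are hypothetical simplifications, not a model of any particular hypervisor.

```python
# Illustrative sketch only. The 2% figure is the vendor estimate quoted in
# the text; the task timings used in the demo are hypothetical.
HYPERVISOR_OVERHEAD = 0.02


def virtualized_time_ms(native_ms: float, overhead: float = HYPERVISOR_OVERHEAD) -> float:
    """Execution time after applying a flat fractional hypervisor overhead."""
    return native_ms * (1.0 + overhead)


def meets_deadline(native_ms: float, deadline_ms: float,
                   overhead: float = HYPERVISOR_OVERHEAD) -> bool:
    """True if the task still finishes within its deadline when virtualized."""
    return virtualized_time_ms(native_ms, overhead) <= deadline_ms


def required_speedup(native_ms: float, deadline_ms: float,
                     overhead: float = HYPERVISOR_OVERHEAD) -> float:
    """Factor by which hardware must get faster for the virtualized task to
    meet its deadline. A value of 1.0 or less means no speedup is needed."""
    return virtualized_time_ms(native_ms, overhead) / deadline_ms


if __name__ == "__main__":
    # Hypothetical control-loop tasks: (native execution time, deadline) in ms.
    for native, deadline in [(8.0, 10.0), (9.9, 10.0)]:
        ok = meets_deadline(native, deadline)
        factor = required_speedup(native, deadline)
        print(f"native={native} ms, deadline={deadline} ms, "
              f"meets deadline={ok}, required speedup={factor:.4f}")
```

Under these assumptions, a task with comfortable headroom is unaffected, while a task already running close to its deadline needs only a modest hardware speedup to recover the margin. Real systems would also have to account for worst-case latency and jitter, which a flat percentage does not capture.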

The second force—the IoT—is the real driver.

Along with the previously mentioned benefits of virtualization, a properly implemented hypervisor can also be used to securely partition critical, real-time operating systems from unsecured, general-purpose operating systems. In other words, a hypervisor can keep core operating software safe while also allowing the system to interface with a huge threat vector – the Internet.

A modern, connected car provides a good example of a system that can benefit greatly from a hypervisor. Virtualization technology allows safety- and performance-critical software (braking, steering, acceleration) and general-purpose, connected software (music player, navigation, smartphone sync) to run on the same processor hardware. Effectively partitioning the two is crucial for the integrity of the vehicle and the safety of the passengers.
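The partitioning idea can be sketched as a toy model. This is not how any real hypervisor is implemented (production hypervisors rely on hardware memory protection rather than software checks), and every name here, including `ToyHypervisor`, `Partition`, and the guest labels, is hypothetical. The sketch shows only the core rule: each guest owns a non-overlapping memory region, and any access outside that region is refused.

```python
from dataclasses import dataclass


class PartitionViolation(Exception):
    """Raised when a guest touches memory outside its own partition."""


@dataclass(frozen=True)
class Partition:
    name: str
    base: int  # first address owned by this guest
    size: int  # number of bytes owned by this guest

    def contains(self, addr: int) -> bool:
        return self.base <= addr < self.base + self.size


class ToyHypervisor:
    """Hypothetical model: physical memory is a flat bytearray."""

    def __init__(self, memory_size: int):
        self.memory = bytearray(memory_size)
        self.partitions = {}

    def add_partition(self, name: str, base: int, size: int) -> None:
        if base < 0 or size <= 0 or base + size > len(self.memory):
            raise ValueError("partition does not fit in physical memory")
        for other in self.partitions.values():
            # Two half-open ranges overlap if each starts before the other ends.
            if base < other.base + other.size and other.base < base + size:
                raise ValueError(f"{name} overlaps {other.name}")
        self.partitions[name] = Partition(name, base, size)

    def _check(self, guest: str, addr: int) -> None:
        if not self.partitions[guest].contains(addr):
            raise PartitionViolation(f"{guest} may not access address {addr}")

    def write(self, guest: str, addr: int, value: int) -> None:
        self._check(guest, addr)
        self.memory[addr] = value

    def read(self, guest: str, addr: int) -> int:
        self._check(guest, addr)
        return self.memory[addr]


if __name__ == "__main__":
    hv = ToyHypervisor(memory_size=1024)
    hv.add_partition("braking_rtos", base=0, size=512)
    hv.add_partition("infotainment", base=512, size=512)

    hv.write("braking_rtos", 10, 0x7F)  # allowed: inside its own region
    try:
        hv.write("infotainment", 10, 0x00)  # refused: belongs to the RTOS
    except PartitionViolation as err:
        print("blocked:", err)
```

In this model, a compromised infotainment guest cannot overwrite the braking system's state because the check sits below both guests. The same reasoning is why the isolation layer itself has to be small and trustworthy.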

With nearly one-third of new vehicles shipping with some form of Internet connectivity in 2015 (and that share growing quickly), usage of embedded virtualization technologies will increase in coming years.

How are regulatory agencies shaping the embedded virtualization landscape? How do embedded developers and vendors view the future of hypervisor technology and its adoption? What is the total size of the market opportunity, given the strong, global push towards connectivity? We discuss all of these points in our latest report: Hypervisors & Secure Operating Systems: Safety, Security, and Virtualization Converge in the IoT.