IoT & Embedded Technology Blog

Embedded & Edge AI Reinvigorates Hardware Market

AI has proliferated throughout many different environments, including the embedded space, which features unique deployment considerations and market opportunities. The leading vertical markets for embedded and edge AI hardware are industrial automation and control, communications and networking, automotive, aerospace and defense, and retail automation, in that order. However, OEMs, systems integrators, industry solution builders, and service providers in nearly all industries are scrambling to add valuable AI capabilities to their offerings to allow for unprecedented edge application performance. As a result, the embedded and edge AI hardware market is extremely fragmented, supporting workloads that range from low-power MCUs to commercial OEM servers, with varying requirements for hardware acceleration, software resources, and development ecosystems.

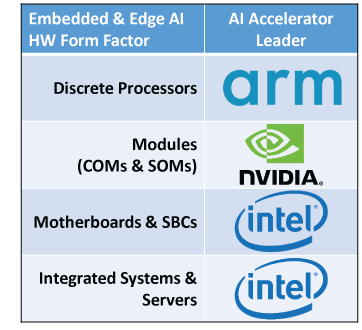

Unlike in the enterprise/cloud domain, a wide and increasing variety of hardware accelerators are in use to support AI workloads in embedded and edge applications. A major contributor is the use of general-purpose processors to execute relatively simple AI functions in initial deployments over the past several years; not all AI workloads demand optimized/dedicated silicon. As a result, the front-running semiconductor architectures for most embedded hardware form factors, such as Arm and Intel, established early leadership in AI. NVIDIA has been a unique powerhouse, expanding its focus on embedded and edge AI from its stronghold in the datacenter.

Without the right software support, AI hardware is dead on arrival in the commercial market. Over the past decade, software development has generally swelled in importance to accelerate time-to-market, generate competitive differentiation, and enable long lifecycle system support. AI is compounding traditional embedded software development requirements with new considerations for developer tools, AI framework/model support, and open source integrations. Embedded and edge AI hardware providers must offer more support for leading use cases, which in many cases today means augmenting established computer vision solutions. However, a wide variety of data inputs and types (e.g., audio, vibration, radar/LIDAR) are being fed to embedded and edge AI systems, requiring compliant and evolving software support.

Enabling and commoditizing AI across the broad embedded and edge space requires an ecosystem. While silicon technology and IP providers are extremely influential in terms of AI workload support, suppliers of embedded modules, boards, and integrated systems/servers are crucial to simplifying and accelerating adoption. They are particularly important for advancing AI in industries with strict requirements for size, performance, thermal management, power consumption, ruggedization, safety/standards compliance, and/or security. It will be critical for all of these hardware providers to continue expanding their “last mile” support and integrations for AI with application-focused offerings, reference designs, and services to fill any development gaps.

Embedded and edge AI is a relatively new, yet critical, layer of the system stack that can greatly extend established application support while also creating new competitive differentiation. Deploying AI beyond cloud or datacenter environments, though, involves a host of unique design considerations and deployment challenges that will reinforce the use of a variety of hardware accelerators and IP.

Nevertheless, the multi-billion-dollar embedded and edge AI hardware market is accelerating as nearly all types of organizations look to add this critical capability to their arsenal.

Learn more in VDC Research’s recently published report, Embedded & Edge AI Hardware.